We Built salty.poker with AI. Here's the Part Nobody Saw.

Twenty-two build sessions in ten days.

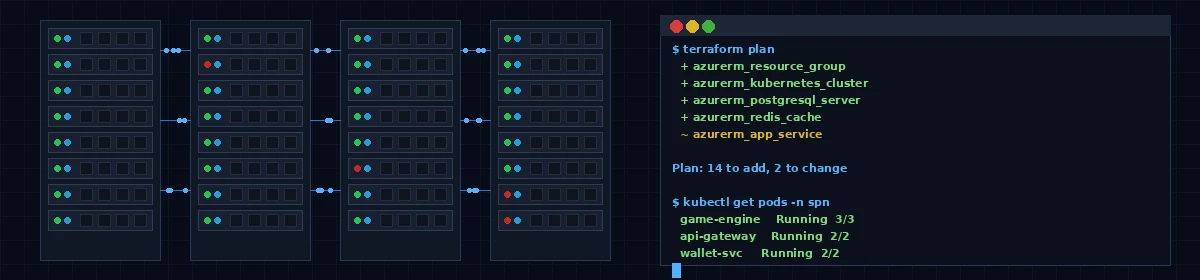

Each session was a Claude Code agent working from a 100-page specification: reading the current state of the codebase, picking up the spec module assigned to it, writing code, wiring services, running migrations, committing. No estimation meetings. No scope-creep conversations. No Jira tickets debated in a conference room.

Those sessions produced 20 microservices, a real-time table engine, tournament management, a double-entry ledger, KYC verification, fraud detection, and a PixiJS-rendered poker table with animated cards and chip stacks.

The changelog covers what shipped. This post is about what happened next.

What Actually Happens After the Code Is Written

Writing correct code and having a working system are two different things. The code passes its unit tests. The services behave correctly in isolation. But when you connect everything — web app, table engine, real-time connections, authentication, database — the surface area for failure grows faster than any test suite can fully anticipate.

This past week was that phase. End-to-end testing. Real logins, real lobby, real tables, real players sitting down. Everything that breaks, breaks here.

The table view loaded and showed an empty canvas where seats should be. No buy-in dialog. No way back to the lobby. A blank screen where a poker game was supposed to be.

The lobby had a table card that stretched to fill the entire page height. One CSS default, one line that nobody wrote intentionally: unless you override the alignment, CSS Grid stretches each item to fill its grid area, so a lone card grows as tall as the row it sits in.
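For readers who have not hit this one, here is a minimal sketch of the stretch default and one common way to switch it off. It is not the code from the salty.poker lobby: the names LobbyGridStyle and lobbyGridStyle, the column template, and the gap value are all hypothetical, chosen only for illustration.

```typescript
// Hypothetical sketch: none of these names or values come from the
// salty.poker codebase. They only illustrate the CSS Grid stretch default.
type GridAlign = "stretch" | "start";

type LobbyGridStyle = {
  display: "grid";
  gridTemplateColumns: string;
  gap: string;
  alignItems: GridAlign;
};

// With no explicit alignment, grid items behave as if align-items were
// "stretch": each item fills the full height of its grid area. Setting
// "start" keeps each card at its own content height instead.
function lobbyGridStyle(alignItems: GridAlign = "start"): LobbyGridStyle {
  return {
    display: "grid",
    // Assumed layout: as many 280px-minimum columns as fit the width.
    gridTemplateColumns: "repeat(auto-fill, minmax(280px, 1fr))",
    gap: "16px",
    alignItems,
  };
}

// Default: cards keep their content height.
const fixedStyle = lobbyGridStyle();
// Passing "stretch" reproduces the behavior described above.
const stretchedStyle = lobbyGridStyle("stretch");
```

The same idea can be written directly in a stylesheet as align-items: start on the grid container, or align-self: start on the card. Which of those fits depends on how the lobby is actually built, which this sketch does not assume.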

Neither of these bugs was written by a human. An AI session wrote the table view. An AI session wrote the lobby. They were still bugs. They still needed to be found, traced to their root cause, and fixed.

Why This Is Still the Better Path

Before the how-it-broke story: the fact that we reached end-to-end testing in ten days is the story.

Two prior companies taught me how long this takes the traditional way. Months of scaffolding before you have something to test. Months more before you are testing something that resembles the final product. The gap between the first commit and the end-to-end testing phase is usually where projects lose momentum, scope, and budget.

We closed that gap in ten days. The testing phase is the hard part. We are in it.

What Is Coming in This Series

This is post one of four. Over the next week, we are going to walk through what we actually found during testing — a routing bug that was invisible until the full stack ran, a dev environment that was silently ignoring code changes, and an auth guard that sent every navigation attempt into a redirect loop.

Each one traces to a root cause that was findable. None of them were obvious until you ran the thing end-to-end with a real browser.

The final post covers what integration testing looks like when AI wrote most of the code — and why it looks almost identical to integration testing when humans wrote it.

For the deeper methodology — how the spec is structured, how sessions are scoped, what the agent actually does during a build — I write about it over at The Salty Korean.

Stay salty.

The Salty Korean

Founder of the Salty Poker Network. Writing about Texas poker, platform building, and the future of online poker. Read more at The Salty Korean.