None of Those Fixes Worked After Refreshing the Page

The routes were fixed. The state endpoint was rewritten. The data format was corrected. Every change was confirmed in the repository, visible in the file, exactly as intended.

Refreshed the browser. Nothing changed.

Same broken table. Same blank canvas.

What Was Actually Happening

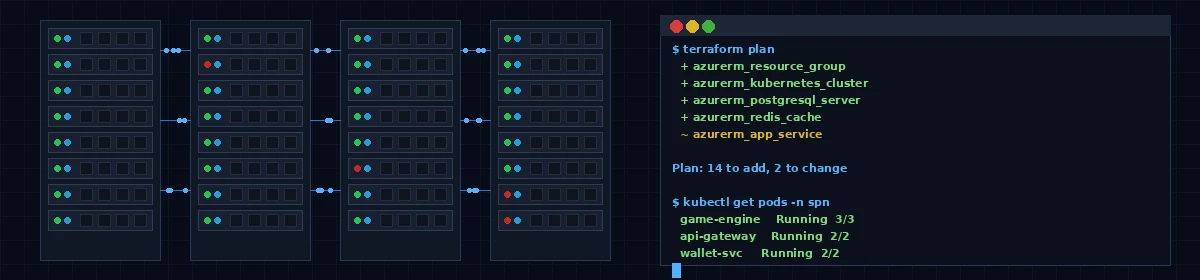

The platform runs locally in Docker — a set of containers, each running a service, connected through a shared configuration. The front-end web app is volume-mounted: source files on disk are mapped directly into the container. Vite, the build tool, watches for file changes and automatically reloads the browser.

Except it was not watching.

On Windows with Docker running over WSL2 (the Linux compatibility layer that Windows uses for containers), the filesystem change notifications that Vite relies on do not reliably cross the virtualization boundary. When the source files live on the Windows side and are edited from Windows, no Linux file-change event (inotify) fires inside the container. Vite never sees the update, so the dev server keeps serving the code that was there before.
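Polling sidesteps the missing events. Rather than waiting for the operating system to report a change, the watcher periodically reads each file's modification time and compares it with the previous reading. The sketch below illustrates that idea in dependency-free TypeScript for Node. The names takeSnapshot, diffSnapshots, and watchByPolling are hypothetical, and this is a simplified model, not Vite's or chokidar's actual code.

```typescript
import { statSync } from "node:fs";

// Map from file path to last-seen modification time (ms since epoch).
type Snapshot = Map<string, number>;

interface SnapshotDiff {
  added: string[];
  changed: string[];
  removed: string[];
}

// Read the current mtime of each watched path. Files that cannot be
// stat'ed (e.g. deleted) are left out, so they show up as "removed".
function takeSnapshot(paths: string[]): Snapshot {
  const snap: Snapshot = new Map();
  for (const p of paths) {
    try {
      snap.set(p, statSync(p).mtimeMs);
    } catch {
      // Missing or unreadable file: omit it from this snapshot.
    }
  }
  return snap;
}

// Compare two snapshots. No OS change notification is involved: a file
// counts as changed purely because its mtime differs between readings.
function diffSnapshots(prev: Snapshot, next: Snapshot): SnapshotDiff {
  const added: string[] = [];
  const changed: string[] = [];
  const removed: string[] = [];
  for (const [path, mtime] of next) {
    const before = prev.get(path);
    if (before === undefined) added.push(path);
    else if (before !== mtime) changed.push(path);
  }
  for (const path of prev.keys()) {
    if (!next.has(path)) removed.push(path);
  }
  return { added, changed, removed };
}

// Re-snapshot on a fixed interval and report any differences.
// Returns a function that stops the polling timer.
function watchByPolling(
  paths: string[],
  intervalMs: number,
  onChange: (diff: SnapshotDiff) => void,
): () => void {
  let prev = takeSnapshot(paths);
  const timer = setInterval(() => {
    const next = takeSnapshot(paths);
    const diff = diffSnapshots(prev, next);
    if (diff.added.length || diff.changed.length || diff.removed.length) {
      onChange(diff);
    }
    prev = next;
  }, intervalMs);
  return () => clearInterval(timer);
}
```

Calling watchByPolling(["src/main.ts"], 300, (d) => console.log(d)) would check the file every 300 ms until the returned stop function is called. Node's built-in fs.watchFile takes the same stat-and-compare approach.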

The fix was one line in the Vite configuration: usePolling: true in the server.watch options. Instead of waiting to be notified of changes, Vite checks for them every 300 milliseconds. It is slightly less efficient. It works.
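Here is a minimal vite.config.ts sketch of that setting, assuming an otherwise default configuration; the project's real plugins and other options are omitted. Vite hands server.watch through to its file watcher, chokidar. The interval: 300 line is an assumption added to pin the polling period to the 300 ms described above, rather than relying on the watcher's default.

```typescript
// vite.config.ts: a sketch; the project's real plugins and options are omitted.
import { defineConfig } from "vite";

export default defineConfig({
  server: {
    watch: {
      // Stat files on a timer instead of waiting for filesystem change
      // events, which do not arrive for Windows-side edits under WSL2.
      usePolling: true,
      // Assumption: polling period set explicitly to 300 ms.
      interval: 300,
    },
  },
});
```

Polling trades some CPU time for reliability, since every watched file is checked on each tick. Keeping the project inside the WSL2 filesystem instead of on a Windows drive is the usual alternative.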

The table engine, the backend service that manages the actual game state, had its own version of the same problem. Backend services in this stack do not run in watch mode, so code changes take effect only after the container is restarted manually. That is standard behavior for a production-grade service. You just have to know to restart it.

The Other Thing That Was Wrong

Once the environment issues were resolved, a third bug surfaced: the table route had an auth guard that always sent the browser to the login page.

Not because the user was not logged in. They were. The guard was checking a TanStack Router context value that was never populated: the router was configured without an auth value in its context, so context.auth was always undefined. The guard read that as “not authenticated” and redirected to login. Login saw an authenticated session and redirected back to the lobby. The browser looped.

One line changed. The guard was updated to read directly from the authentication store, the same pattern every other route in the application uses. Fixed.
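The difference between the two guards can be modeled without the router. This framework-free TypeScript sketch does not use TanStack Router's API. authStore, the guard functions, navigate, and the route paths are hypothetical stand-ins, and a guard here simply returns the path to redirect to, or null to allow the visit. In TanStack Router itself, the documented place for this kind of check is a route's beforeLoad, which throws redirect(...).

```typescript
// Hypothetical shapes: the real app's auth store and routes may differ.
type AuthState = { isAuthenticated: boolean };
type RouterContext = { auth?: AuthState };

// Hypothetical stand-in for the application's authentication store.
const authStore: { state: AuthState } = { state: { isAuthenticated: true } };

// A guard returns a path to redirect to, or null to allow navigation.
type Guard = (context: RouterContext) => string | null;

// Broken table guard: trusts router context, which was never populated,
// so context.auth is undefined and every visit looks unauthenticated.
const tableGuardBroken: Guard = (context) =>
  context.auth?.isAuthenticated ? null : "/login";

// Fixed table guard: reads the auth store directly, like the other routes.
const tableGuardFixed: Guard = () =>
  authStore.state.isAuthenticated ? null : "/login";

// Login page: an already-authenticated user is sent on to the lobby.
const loginGuard: Guard = () =>
  authStore.state.isAuthenticated ? "/lobby" : null;

// Follow redirects from `start` (up to maxHops) and return every path visited.
function navigate(
  start: string,
  guards: Record<string, Guard>,
  context: RouterContext,
  maxHops = 10,
): string[] {
  const visited = [start];
  let current = start;
  for (let hop = 0; hop < maxHops; hop++) {
    const guard = guards[current];
    const next = guard ? guard(context) : null;
    if (next === null) break; // No redirect: the user stays here.
    visited.push(next);
    current = next;
  }
  return visited;
}

// Router context with no auth value, as in the bug.
const emptyContext: RouterContext = {};
```

With the broken guard, following redirects from /table goes /table, /login, /lobby and never reaches the table. The fixed guard lets the visit through. Populating the router's context when the router is created would also have satisfied the original check; reading the store directly, as the post describes, keeps this route consistent with the rest of the application.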

What This Requires of the Person at the Keyboard

The fixes were correct. The system was not picking them up. That observation — that correct code and running code are two different things — required knowing the development environment well enough to ask the right question.

The agent can write the fix. It can trace the bug once you describe it. It cannot notice that the dev server is still serving the code from before the fixes were made. That observation is human.

Knowing the system well enough to diagnose why a correct fix is not taking effect — that is the part that does not get automated.

After the polling configuration, after the container restarts, after the auth guard correction: the table loaded. Seats appeared. The buy-in dialog rendered. The lobby cards were the right height. Everything worked.

The next post covers what integration testing looked like once we had a working system to test against — and why that phase looks the same regardless of whether AI or humans wrote the code.

For the founder’s view on navigating this kind of debugging process, and what the human-in-the-loop role actually demands when AI wrote most of the codebase, head over to The Salty Korean.

Stay salty.

The Salty Korean

Founder of the Salty Poker Network. Writing about Texas poker, platform building, and the future of online poker. Read more at The Salty Korean.